How to Universally Screen Students Efficiently

- November 7, 2023

3 Minutes of Learning

If you have about 3 and a half minutes, check out this math interview with a kindergartner and see how much you learn about this student with this universal screening tool.

In a few minutes, teachers can gain a wealth of information about a learner, including starting places for instruction and an understanding of the child's strengths. If using these interviews for intervention groupings, teachers will be able to develop groupings based on those students identified as at risk, or more flexible groupings based on needs around numeral identification, the counting sequence, skip counting, place value, or operations and algebraic thinking.

In the few minutes it takes to sit down with young learners and present them with tasks, we share with them so many important values:

- We share that we value them as learners, and we care to understand what they know.

- We share with them that we value early numeracy and the specific concepts and skills we find important at that time of year in their grade.

- We share that we value the process of assessment, and how important it is to reserve time for our students to demonstrate understanding.

In this way, the interview fulfills the primary purpose of universal screening (identifying students at risk of poor learning outcomes) in a short period of time. It also achieves additional purposes, such as informing instructional responses. But perhaps most importantly, teachers devote time to building the student-teacher relationship and communicating values to students.

You can learn a lot about a student in just 3 minutes.

Universal Screening

According to the National Center on Intensive Intervention and the Massachusetts Department of Education, universal screening's primary purpose is to help identify those students at risk for poor learning outcomes. Universal screening assessments are typically brief, reliable, and valid assessments conducted with all students from a grade level. Read more about the validity and reliability of our own Universal Screeners for Number Sense (USNS) here.

Although universal screening's primary purpose is to identify areas of struggle and students at risk of not meeting grade-level expectations, assessments fulfill many purposes. In this article, we provide an overview of assessment types, from state tests to classroom formative assessments, and their purposes. These purposes overlap: for example, summative assessments can be used formatively to guide instruction, and interim/benchmark assessments are often used as screening tools.

When considering the question of efficiency, then, we have to ask: how quickly is this assessment tool helping me with its intended purpose? Are there secondary purposes that the assessment tool serves that help me do my work better and more efficiently?

Multiple Purposes of Assessments

When considering universal screening for your schools, think of each of the potential uses of assessments. Efficient assessment systems will enable you to utilize the results effectively for multiple purposes.

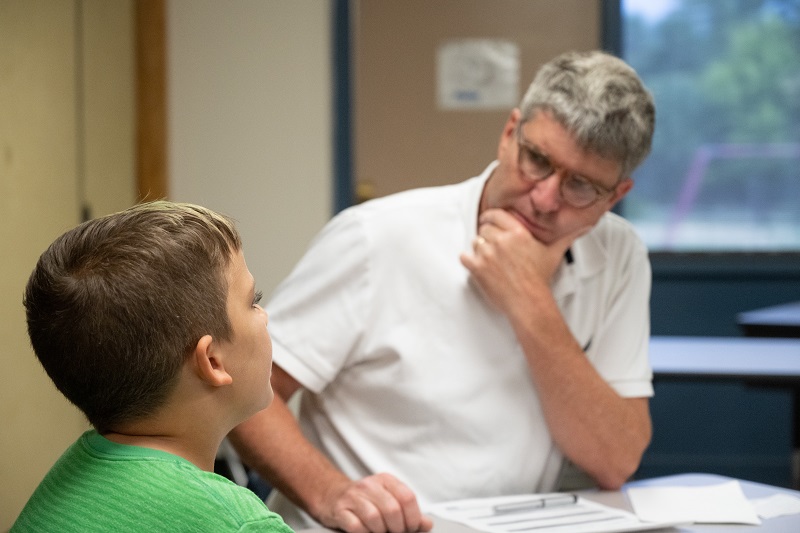

The Universal Screeners for Number Sense (USNS) assessments are interview-based assessments, meaning that teachers sit with students one-on-one to assess them. Teachers can use the assessment results for the following purposes:

Formative Information (for Feedback)

Effective feedback is goal-referenced, transparent, actionable, user-friendly, and timely. By using the USNS assessments, teachers are able to directly witness student behaviors in ways that make meaningful feedback possible. Consider these examples:

“I noticed that you are having trouble counting through the teen numbers and sometimes you skip thirteen. Practice counting all this week, and let’s see if you can count better by Friday.”

or

“When you are counting objects, you need to say one number each time you touch an object. Sometimes you count faster than you are touching the blocks. Slow down and practice moving the objects one at a time while you say the numbers.”

Compare this with digital, computer-based screening assessments and the data they provide. Because the results a computer provides lack specificity, truly effective feedback is limited at best. Teachers have to use other assessment opportunities to gather information and to provide feedback.

Formative Information (for Instructional Planning)

When teachers have collected valuable assessment information directly from students, the instructional next steps are easy to see. In fact, one of the things we tell teachers during professional learning on the assessments is to refrain from teaching while assessing, because what they learn through the USNS interviews almost compels them to teach. Compare this to data from digital assessments, where instructional responses are hard to identify.

Because the learning goals being assessed are also fully transparent, teachers can quickly identify goals for instruction, intervention, and small group work.

Assessment as Communication

How many times during the pandemic did we find that the quality of communication suffered in the shift to remote forms? Virtual meetings, emails, and chats offer quicker, more convenient forms of communication, but convenience is not the same as efficiency. Sometimes an in-person meeting, even with the time it takes to get into the same room, provides a quality of communication unsurpassed by the various remote communication tools.

It is the same with assessments. Assessments are, first and foremost, a method of communication. We use assessments to enable students to communicate what they know and can do. There are many reasons educators need this information: to provide formative information to guide instruction, for grades, to monitor the effectiveness of their instruction, to support family communication, and much more. This communication is critical for the work of teachers, schools, and school districts.

There has been a massive effort over the past two decades to improve online assessments. Having students sit in front of computers to have assessments automatically scored has been seen as a way to save people time and to collect data seamlessly, but the process of sitting beside our students to listen to them, observe them, and learn about what they can and can't do cannot yet be replicated by computer-based assessments. Teaching is a human-centered profession, and assessment remains the cornerstone of how we communicate with and listen to our students.

Valid Universal Screening

Digital assessments are getting more and more sophisticated every day, but they present a challenge for young learners who are still learning to manipulate a mouse or type on a keyboard, or who are not yet developmentally ready for computer-based assessments.

To gather more valid results from digital assessments for young learners, teachers and other support staff have to provide 1:1 assistance. The "efficiency" of a whole class taking a computer-adaptive test in lower elementary is more complex than sending a class with one teacher to the computer lab. Additional support staff need to be on hand to make sure that the results captured are valid pictures of students' understanding and not the results of young children clicking randomly through computer-based assessments. We explore the costs of digital assessments further in this recent article.

Back to Basics vs. Digital Assessments

Online assessments are still very limited in what kinds of information they can collect. This issue is acute in the primary years. Critical skills like being able to count verbally and to count sets are not easily assessed on a computer. The ability to read and write numerals is impossible to assess. In the kindergarten to grade 2 years, students apply a wide variety of strategies for solving problems. Knowing how a student solved a problem, not just whether their answer is correct or incorrect, is critical information for effectively supporting students in their development.

Computers make the collection of assessment data quick and painless for teachers, but we lose so much information when we utilize computers to collect that information for us. In the attempts to make things more efficient, we learn less about what students know and can do. In the primary years, this is particularly problematic. The computer itself becomes like a filter. At best, students attempt to communicate what they know about mathematics through a keyboard, mouse, and screen. And teachers, with good reason, look at the results from these assessments with skepticism for younger learners:

- Do these results really reflect what students know?

- Did the students focus?

- Did they rush through the assessment?

- Did they understand the problems correctly?

- Did they guess?

When teachers do not trust the results of an assessment, the time devoted to that test is lost time.

The Universal Screeners for Number Sense (USNS)

The Universal Screeners for Number Sense take a very different approach to universal screening. These assessments include interviews with students. By interviewing students, you learn a lot more than whether they can simply get answers correct. You learn about their thinking, and you learn about their confidence and anxieties. Most importantly, you learn about what they do know, even when their answers might not be correct the first time. By working directly with students, teachers gather deep, important information firsthand. Learn more about the USNS Project and download the K-6 assessment guide here.

Conclusion

When using digital assessments, teachers may feel they receive broad-stroke information about overall student performance. Surely sending kids to the computer lab for 20 minutes is an efficient way to gather learning data? At best, this information simply confirms what teachers already know without providing diagnostic-level information to inform next steps. The questions on these assessments are unknown to teachers, leaving them with imprecise information about where students performed well and where to offer additional supports.

At worst, and particularly for the youngest learners, teachers and support staff devote significant resources to improving the validity of the results, having support staff work 1:1 with students during assessment windows. At the same time, digital assessments do not provide opportunities to gather data about the most important, foundational skills that cannot yet be assessed well digitally. In all scenarios, even with beautiful, color-coded reports, student thinking and problem-solving strategies are hidden and learning is reduced to a percentile score.

When considering how to universally screen students efficiently, consider how much can be learned about students in just 3-5 minutes. The time that teachers have to spend assessing kids is evident, but the rich information gathered about what students can and cannot do saves teachers time. With interviews, teachers directly receive data for feedback and to inform instruction. They do not have to rely on additional assessment tools to provide this information. Even without the support of Forefront or other assessment data solutions to help educators visualize and interpret results, these assessments lead directly to instructional responses. In this way, assessment as communication can flow naturally and powerfully in your classrooms when high-quality assessment tools are in place.

USNS Validation Summary

Read the entire summary of this external review of the USNS by Jonathan Bostic, PhD, and Timothy Folger, MEd (2023).

Copyright © 2025 Forefront Education, Inc. All Rights Reserved.